There seems to be a fundamental misunderstanding of the purpose of review aggregators: things like a movie’s Rotten Tomatoes score, Metacritic score or CinemaScore. While they all serve an important function in interpreting the reception movies receive from critics and the public, by and large none of them is well understood by the people trying to use them, and they are often used to prove something that they were never designed to measure in the first place.

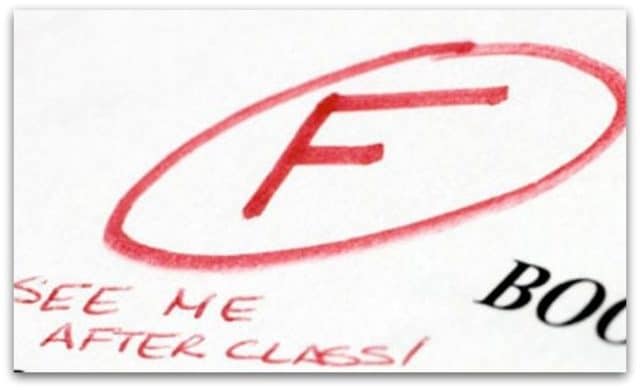

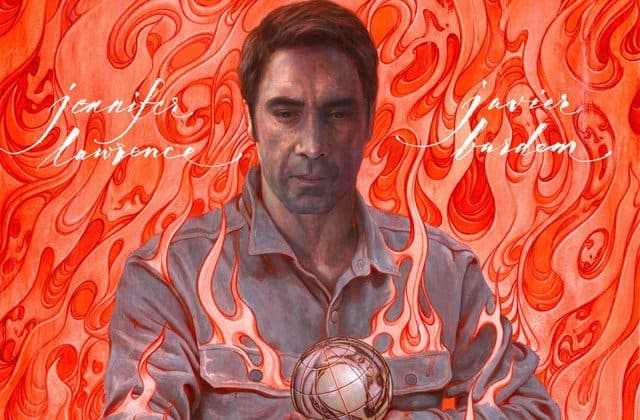

Although the release of Mother! this weekend throws the gap between what these scores measure and how the public uses them into sharp relief, it’s a subject that I’ve wanted to touch on for a while now. Just because Mother! got an “F” CinemaScore doesn’t mean that it was poorly received. Not really. That’s because a movie’s CinemaScore was never meant to measure how well liked (or, ostensibly, how “good”) a movie is.

Actually, let’s take a closer look at CinemaScore, the crux of a lot of people’s arguments that Mother! is a bad movie. This score is taken from moviegoers right as they leave the theater, giving them no time to reflect on what they just saw. It’s an initial gut reaction that’s meant to measure one thing and one thing only: how well a movie was advertised to the public.

Think about it. Any amount of “reviewing,” even casual banter between friends, requires at least some time to think over what you just saw. A lot of movies need time for their quality (good or bad) to sink in, which is why most reviewers, myself included, don’t hammer out a review immediately after seeing a film. They wait, maybe sleep on it, and give the film time to percolate before putting their thoughts into words.

CinemaScores don’t allow for that by design. They simply measure what people feel the second the credits roll and the house lights come on. What that initial reaction does capture is how closely a movie matched a person’s expectations for it. In other words, it shows whether people got what they expected out of a movie: whether its advertising was accurate, honest and clear about the experience the studio was selling.

So when Mother! received an “F” CinemaScore, audiences weren’t necessarily saying that it was bad, although more than a fair number of them certainly thought so. They were saying that the film’s marketing left them with mismatched expectations. More than disliking the movie, they were disappointed that it wasn’t what they had been promised when they spent their money.

Rotten Tomatoes is probably the most commonly used review aggregator on the internet, and the one that people seem to be most confused about. Some people seem to think that the site puts out the score itself, as if it were a reflection of how much the website (or at least its editorial and writing staff) liked or disliked a movie.

This is absolutely not the case. A Tomato Score isn’t crafted by the site itself: it’s merely a reflection of what reviewers, on the whole, thought about the movie. Beyond that, it’s not even a measure of how well a movie was liked, but of what the broadest possible reaction to it was.

Rotten Tomatoes doesn’t look at how much or how little a reviewer liked a movie. It simply determines whether each review was generally positive or generally negative, then counts as many of these binary verdicts as possible. The percentage is simply “how many critics generally liked this movie,” without any consideration given to the degree to which they enjoyed it.

This is why a movie like Annabelle: Creation has a 68% rating on the site. It’s not that the site thought it deserved that score, nor that reviewers liked the movie especially well, but that, very broadly, most of them enjoyed it. By that token, Mother!, which also got a 68% rating, was just as broadly liked.

Metacritic is the exact opposite of Rotten Tomatoes. Rather than measuring how many people liked a movie, it measures how much each critic liked it, then averages those ratings into a single score. If everybody somewhat enjoyed a movie (enough that they “liked” it, but didn’t think all that much of it in the end), then it could have a high Rotten Tomatoes score but a low Metacritic score.
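To make the difference concrete, here’s a rough sketch in Python of the two approaches described above. Everything in it is an assumption for illustration: the critic scores are invented, the 60-point cutoff for a “positive” review is a number I picked, and the plain unweighted mean is a simplification rather than either site’s actual method.

```python
from statistics import mean

# Hypothetical critic scores on a 0-100 scale (invented for illustration).
lukewarm = [65, 62, 70, 68, 61, 66, 64, 63, 67, 30]    # almost everyone mildly liked it
divisive = [100, 95, 90, 100, 85, 90, 95, 20, 10, 15]  # most loved it, a few hated it

POSITIVE_THRESHOLD = 60  # assumed cutoff for counting a review as "positive"


def percent_positive(scores, threshold=POSITIVE_THRESHOLD):
    """Tomato-style number: the share of reviews at or above the cutoff."""
    positive = sum(1 for score in scores if score >= threshold)
    return round(100 * positive / len(scores))


def average_score(scores):
    """Metacritic-style number: a plain, unweighted mean of the scores."""
    return round(mean(scores))


for name, scores in [("lukewarm", lukewarm), ("divisive", divisive)]:
    print(f"{name}: {percent_positive(scores)}% positive, average {average_score(scores)}")
# lukewarm: 90% positive, average 62
# divisive: 70% positive, average 70
```

The lukewarm movie wins on the percentage but loses on the average, which is exactly the kind of split the two sites can show for the same film.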

This is why Metacritic’s scores always appear to be different from what Rotten Tomatoes posts: the site offers a fundamentally different measure of a movie. It’s why Annabelle: Creation’s score drops from its respectable RT score, while Mother! rises to much stronger praise.

None of this is to say that you should or should not feel a particular way about a given movie. This certainly isn’t a defense of Mother! in particular. This isn’t to say that your gut reaction to seeing something for the first time is necessarily wrong, or that any one review aggregator is better or worse than any other.

These scores all measure different things about a movie’s perceived quality, and they shouldn’t be used beyond the specific, inherently narrow context of what they are intended to reveal about a movie. Mother! wasn’t well advertised (“F” CinemaScore), but it was fairly widely liked (68% RT score) and outright loved by many people who saw it (74 MC score). To use these scores otherwise is to entirely miss the point of why they’re published in the first place.
