While retro gaming certainly has plenty going for it, the early years of gaming were much more primitive, and many things we take for granted today simply weren’t possible at the time. Here are 10 reasons why retro gaming was bad when compared to modern gaming.

10. Low Budget Commercials

There have been some iconic video game commercials over the years, especially the Super Nintendo commercials featuring Rik Mayall. But there were also plenty of really low-budget commercials that did little to make a game appealing to potential buyers. Today, trailers released online have replaced traditional commercials, and they usually just show off cinematics and gameplay. Check out some iconic commercials below.

9. Hardware Limitations

There will always be hardware limitations in gaming. Every time a new console or piece of hardware is released, developers run into its limits as they try to push beyond current capabilities. But hardware was much more primitive in the early years, meaning most games were incredibly basic or had to make compromises, such as lower frame rates or shorter draw distances. These trade-offs were made to allow for a better visual and gameplay experience at the cost of certain elements. This was evident in games such as Star Fox, which had a low frame rate and short draw distance, as well as many N64 games that ran at under 30fps, with many PAL region games running at 20fps.

8. Early Copy Protection

Piracy protection is a major part of gaming today, with developers trying their hardest to stop pirates from cracking a game and distributing it for free. While many games still get cracked upon release, it is more difficult than it was in the early years. In the 80s and 90s, it was incredibly easy to get around copy protection and play pirated games. Developers did implement some funny measures, such as breaking the game if a pirated copy was detected, but over time these were worked around too. Piracy will always be a part of the entertainment industry, but developers will continue to fight it in a never-ending battle.

7. Bad Translations

For a long time, developers didn’t put much effort into localization when bringing games to the West from Japan. This led to some really bad translations, such as elevator being spelled wrong in Resident Evil 2, as well as the infamous “All your base are belong to us” in Zero Wing. As gaming grew in popularity in the 90s, we started to see developers hire localization teams to accurately translate games, and this largely isn’t an issue today.

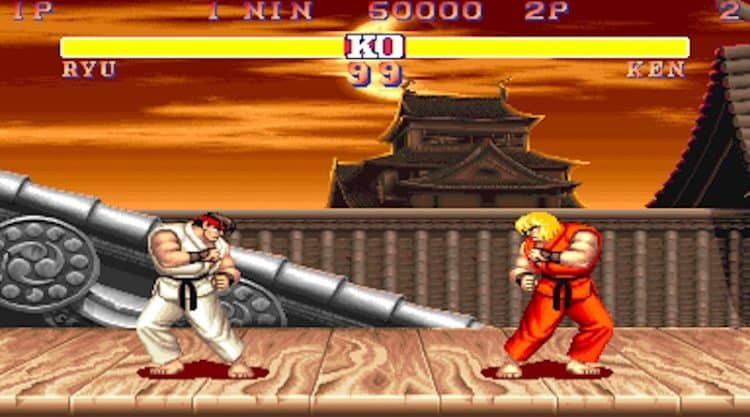

6. Insane Difficulty Curves

Video games today are generally a lot easier than they were 30 years ago, although there are exceptions such as Dark Souls. Many modern games hold your hand with extensive tutorials and a much more forgiving difficulty curve. Try playing Street Fighter 2 and 5 on the same difficulty setting and you will see how much the difficulty differs within the same series. During the earlier years of gaming, many games were incredibly difficult, with some titles such as Battletoads being next to impossible for the average gamer. This was probably because most games were much smaller back then, with many able to be completed in under one hour, so ramping up the difficulty kept players engaged for longer and provided more replayability.

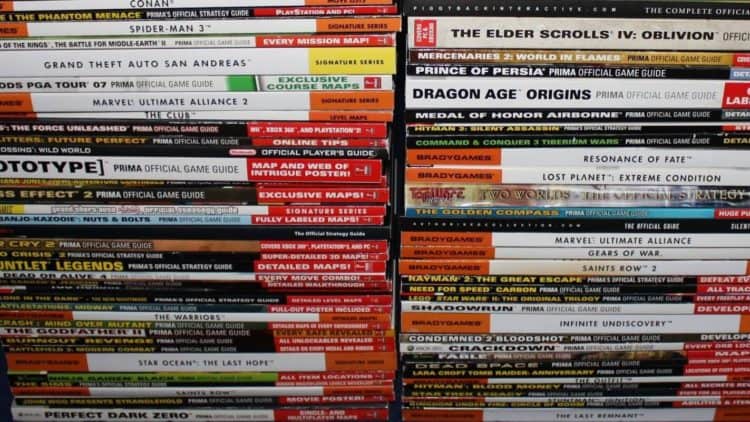

5. Lack Of Guides And Walkthroughs

Before the internet, finding a guide for a game was either incredibly difficult or expensive. Some companies produced guides for many games, which became incredibly popular with gamers, and some magazines also released their own guides. But for the most part, we were completely alone in figuring out how to complete a game. Once the internet came about, sites like GameFAQs became popular and allowed gamers to create their own guides to share with other players.

4. No Patches Or Updates

Although I counted this as a good thing in my list of why retro gaming was good, it can also be a downside. Before the Xbox 360 and PS3, console games generally had no way of receiving patches and updates, meaning what was released was what you got, and any issues in the game couldn’t be fixed after release. There were a few instances of games being updated, such as Nintendo releasing an updated version of Super Mario 64 in Japan (the same version we got in the West) and Capcom releasing five versions of Street Fighter 2, but typically a game never saw an update. While this meant that developers had to make sure the game was as polished as possible and players got the complete experience, some games were ruined by issues that couldn’t be fixed, which led to players simply not purchasing those games.

3. Games Were More Expensive Compared To Today

For a long time, most games have cost around $40-60 to purchase, even during the 90s, with some SNES games costing as much as $75-90. Since the release of the PS5 in 2020, many new releases have cost $70 despite backlash from players. While this is a lot of money now, it was even more 30 years ago. Teenage Mutant Ninja Turtles: Turtles In Time cost $75 upon release in 1991, which adjusted for inflation would be around $150 today. Can you imagine paying $150 for the latest AAA release? I know I wouldn’t play half the number of games I do if I had to pay that much.
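If you want to check the inflation math yourself, here is a minimal Python sketch. It scales the old price by the ratio of two consumer price index values. The function name and the two index figures are my own assumptions for illustration (approximate US CPI-U annual averages for 1991 and 2021), so swap in official numbers if you need precision.

```python
def adjust_for_inflation(price, cpi_then, cpi_now):
    """Scale a historical price by the ratio of two consumer price index values."""
    return price * cpi_now / cpi_then


# Assumed, approximate US CPI-U annual averages (not taken from the article).
CPI_1991 = 136.2
CPI_2021 = 270.97

launch_price = 75.00  # Turtles In Time price quoted above
adjusted = adjust_for_inflation(launch_price, CPI_1991, CPI_2021)
print(f"${launch_price:.2f} in 1991 is roughly ${adjusted:.2f} in 2021 dollars")
```

With these assumed index values the result lands just under $150, in line with the figure quoted above. A later "today" year would push the number higher.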

2. No Saving Your Game

It is hard to imagine playing a game today and not being able to save your progress. Can you imagine playing a game such as GTA V or Skyrim and having to start a new game every time you turned off your console or PC? A battery was added to some NES games, which allowed progress to be saved, and this became standard practice in later generations. But before that, games either had a password system or simply didn’t offer any way to pick up where you left off. Many games were incredibly short during this time, but if you weren’t able to complete one in a single sitting, you would have to repeat the levels over and over again. Although that was a great way to improve your skill at the game, I think we can all agree that saving is essential in gaming today.

1. Lack Of Reviews

With the internet now a large part of most of our lives, finding out whether a game is good or not doesn’t require much effort. Once an embargo lifts for a new game, dozens of reviews go live, and sites such as Metacritic gather them together so you can get an idea of whether the game is worth your time and money. Before the internet, though, finding out whether a game was good was much more difficult, and next to impossible during the early days of gaming. This allowed plenty of low-quality third-party games to flood the Atari 2600, which contributed to the video game market crash in 1983. Thankfully, magazines became popular in the following years, which allowed gamers to find out from critics whether a game was good, and now the internet makes it even easier.
